As facial recognition systems continue to spread, so do concerns about their deployment

Facial recognition is hardly new – Privacy News Online has been writing about the topic for years now. But it is increasingly becoming the norm, as recent news shows. Consider, for example, this story from Rolling Stone:

Taylor Swift fans mesmerized by rehearsal clips on a kiosk at her May 18th Rose Bowl show were unaware of one crucial detail: A facial-recognition camera inside the display was taking their photos. The images were being transferred to a Nashville “command post,” where they were cross-referenced with a database of hundreds of the pop star’s known stalkers.

The honey-pot approach – offering something that is likely to attract people so that their faces can be scanned and compared with a watchlist – could easily be adapted for use in other situations. Although it’s a clever trick, the American Civil Liberties Union (ACLU) points out that the technique raises a number of important issues.

For example, it seems that people were not told that a facial recognition system would be used in this way. The ACLU asks whether the images taken were saved, or shared with anyone else. Did security at the event use them to track individuals they regarded as “suspicious” at the concert? Facial recognition systems still have problems with accuracy, which raises the question of how people flagged as possible known stalkers were approached and treated. Did the security staff assume they were guilty until proven innocent, or did they carry out further checks? The ACLU points out that the approach adopted at Taylor Swift’s concert only works if there is an existing watchlist. That, in turn, raises important questions about how such watchlists are created, whether they are fair or biased, and how people can get themselves removed from them.

The ACLU also has concerns about a new Amazon patent that aims to spot “suspicious” individuals. As this blog reported earlier this year, Amazon is a major player in the facial recognition sector, and is keen for its Rekognition technology to be used by government departments, including the police. The new patent pairs facial recognition with products from Ring, a doorbell camera company that Amazon bought:

the application describes a system that the police can use to match the faces of people walking by a doorbell camera with a photo database of persons they deem “suspicious.” Likewise, homeowners can also add photos of “suspicious” people into the system and then the doorbell’s facial recognition program will scan anyone passing their home. In either case, if a match occurs, the person’s face can be automatically sent to law enforcement, and the police could arrive in minutes.

Amazon’s plans don’t stop there, according to the ACLU. The patent raises the possibility of equipping other devices with extended biometric detection capabilities. These might include fingerprints, skin-texture analysis, DNA, palm-vein analysis, hand geometry, iris recognition, odor/scent recognition, and behavioral characteristics, like typing rhythm, gait, and voice recognition.

Meanwhile, in the UK, the Metropolitan Police have been conducting trials of real-time facial recognition this week in central London. Once again, the aim is to spot people on a watchlist, this time by scanning everyone who walks past specially equipped police vehicles during an eight-hour period. According to the police press release on the trials:

Anyone who declines to be scanned during the deployment will not be viewed as suspicious by police officers. There must be additional information available to support such a view.

If the technology generates an alert of a match, police officers on the ground will review it and further checks will be carried out to confirm the identity of the individual.

Those additional checks are vital: a study of UK police use of facial recognition, published by the privacy group Big Brother Watch earlier this year, found the technology alarmingly inaccurate. The report showed that 98% of the Metropolitan Police’s facial recognition matches were wrong, and that the system misidentified 95 people at last year’s Notting Hill Carnival as criminals. Subsequent trials were even worse, producing only false positives and zero correct identifications.

Also worrying is the fact that South Wales Police store, for a year, photos of all innocent people incorrectly matched by facial recognition, without their knowledge or permission. This has resulted in a biometric database of over 2,400 innocent people. Following the release of its report, Big Brother Watch began a legal challenge to the use of facial recognition in the UK. A more recent report by academics at Cardiff University also found a wide range of problems with police deployment of the technology.

A number of experts have expressed serious concerns about the privacy implications of these kinds of police tests. For example, the UK’s Information Commissioner wrote:

how facial recognition technology is used in public spaces can be particularly intrusive. It’s a real step change in the way law-abiding people are monitored as they go about their daily lives. There is a lack of transparency about its use and is a real risk that the public safety benefits derived from the use of [facial recognition technology] will not be gained if public trust is not addressed.

Similarly, the UN Special Rapporteur on the right to privacy, Joseph Cannataci, described the use of facial recognition units in public as “chilling”, and said:

I find it difficult to see how the deployment of a technology that would potentially allow the identification of each single participant in a peaceful demonstration could possibly pass the test of necessity and proportionality.

One company that has been particularly vocal about the risks of facial recognition technologies is Microsoft. Earlier this year, Privacy News Online wrote about the company’s call for regulation in this area. Microsoft’s Brad Smith has written a follow-up post calling for government action, and offering concrete suggestions for what form legislation should take, including the following:

To protect against the use of facial recognition to encroach on democratic freedoms, legislation should permit law enforcement agencies to use facial recognition to engage in ongoing surveillance of specified individuals in public spaces only when:

a court order has been obtained to permit the use of facial recognition services for this monitoring; or

where there is an emergency involving imminent danger or risk of death or serious physical injury to a person.

A new report from the AI Now Institute at New York University, published this month, agrees that legislation is urgently needed: “Facial recognition and affect recognition need stringent regulation to protect the public interest.” “Affect recognition” refers to claims that our faces exhibit micro-expressions that are “biomarkers of deceit”, as a recent blog post discussed. However, the AI Now Institute report warns: “These claims are not backed by robust scientific evidence, and are being applied in unethical and irresponsible ways that often recall the pseudosciences of phrenology and physiognomy.”

The field of facial recognition is moving fast, with novel and interesting applications appearing all the time. There is no doubt that the technology could offer numerous benefits to society. The question is whether it will, or whether it will simply become a pervasive instrument of surveillance by both companies and governments.

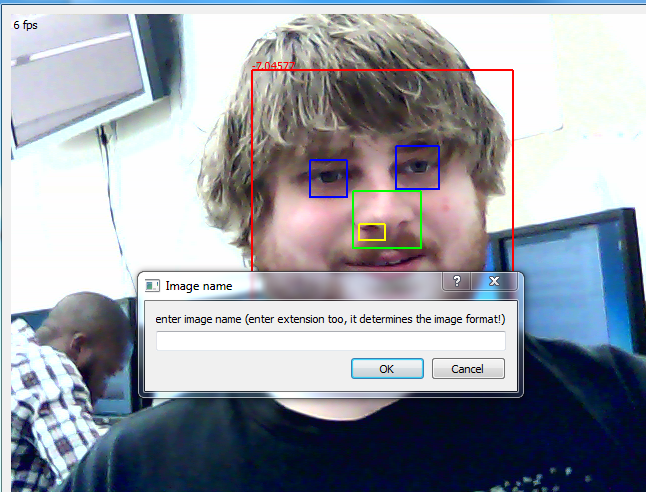

Feature image by Mbroemme5783.